Episode Summary

ThursdAI this week is a full-throttle mix of infrastructure, benchmark reality checks, and breaking voice model drops mid-show. Alex and the panel unpack OpenAI sunsetting Sora, ARC-AGI-3 launching with frontier labs still under 1%, and Google's TurboQuant claims that shook hardware narratives around KV-cache bottlenecks. Daniel Han Chen from UnSloth joins live to walk through UnSloth Studio and why practical install and usability pain—not model quality—is still a major blocker for open-source adoption. The episode closes on fast-moving product drama, from Cursor Composer 2 to Claude quota pain, while the panel debates whether AGI is already here depending on whose definition you trust.

In This Episode

- 📰 TLDR - Weekly AI Recap

- 🔓 Open Source AI

- 🛠️ UnSloth Studio with Daniel Han Chen

- 🧪 TurboQuant - KV Cache Compression

- 🤖 Claude Computer Use

- 🔊 Gemini 3.1 Flash Live

- 🔊 Mistral Voxtral TTS

- 🔊 Cohere Transcribe ASR

- 🎨 Lyria 3 Pro - AI Music Generation

- 🔓 Minimax 2.7 Open Source Weights

- 🔥 Cursor Composer 2 Drama

- ⚡ Claude Feels Dumber

- ⚡ Claude Quota Meltdown

- 💰 RIP Sora

- 🏢 Jensen Says AGI is here

- 🧪 ARC-AGI-3 Launch and test


📰 TLDR - Weekly AI Recap

Alex opens with a dense rundown: Claude computer control, quota blowups, OpenAI discontinuing Sora, ARC-AGI-3 launch, and Google TurboQuant. The framing is practical—what immediately changes workflows and what is hype versus signal. The panel also flags supply-chain security risk as agent tooling proliferates.

- OpenAI discontinues Sora app/API and related GPT video features

- ARC-AGI-3 launches with frontier systems under 1%

- TurboQuant claims 6x KV-cache compression + 8x speedup

- Supply-chain compromise in LiteLLM highlighted as agent-era risk

🔓 Open Source AI

The open-source section sets up two major threads: efficient multimodal models and practical deployment friction. Reka Edge is called out for sub-second multimodal inference before the show pivots to UnSloth and inference economics.

- Reka Edge highlighted as a notable 7B multimodal release

- Discussion emphasizes real-world deployment constraints over benchmark theater

🛠️ UnSloth Studio with Daniel Han Chen

Daniel Han Chen joins to explain UnSloth's jump from Python-only tooling to a UI-first product. He describes how many user problems are still installation and setup failures rather than model capability limits. The panel treats this as a key adoption unlock for local training and inference.

- Daniel Han Chen introduced as live guest from UnSloth

- UnSloth Studio launched during GTC week with NVIDIA as launch partner

- Team reports install/UX friction as the biggest bottleneck today

🧪 TurboQuant - KV Cache Compression

The group breaks down Google Research's TurboQuant and why it triggered outsized market reactions. They discuss KV-cache compression as a lever that could materially change inference economics if productionized broadly.

- Claimed 6x KV-cache compression with minimal quality loss

- Claimed 8x speedup becomes central debate point

- Panel calls stock-market panic premature without broader validation
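To get a feel for why a 6x KV-cache compression claim matters for inference economics, here is a rough back-of-the-envelope sketch. It only does the memory arithmetic and does not implement TurboQuant itself. The model shape (32 layers, 8 KV heads, head dimension 128, 128K context) is a hypothetical example, not a figure from the show or from Google's paper.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, bytes_per_value=2, batch=1):
    """Size of a KV cache: one key and one value vector per layer, KV head, and token."""
    return 2 * layers * kv_heads * head_dim * seq_len * bytes_per_value * batch


# Hypothetical model shape, chosen only for illustration (assumption, not from the source).
baseline = kv_cache_bytes(layers=32, kv_heads=8, head_dim=128, seq_len=128_000)

# Apply the claimed 6x compression ratio to the 16-bit baseline.
compressed = baseline / 6

print(f"16-bit KV cache: {baseline / 2**30:.2f} GiB")
print(f"at a claimed 6x: {compressed / 2**30:.2f} GiB")
```

Under these assumptions, a single long-context request goes from a cache measured in double-digit GiB to a few GiB, which is why the claim drew so much attention. Whether that holds up in production is exactly what the panel wants validated.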

🤖 Claude Computer Use

Anthropic's computer-use capabilities move from demo territory to practical workflows. The panel compares this to existing OpenClaw-style patterns and discusses where direct UI control is genuinely useful versus overkill.

- Claude can now control local Mac workflows

- Remote/control features compared against existing agent tools

🔊 Gemini 3.1 Flash Live

Google's live multimodal stack is positioned as a major upgrade for end-to-end voice and vision agents. The key point is reducing stitched pipelines by handling realtime interaction in one model path.

- Gemini 3.1 Flash Live framed for realtime voice + vision agents

- AI Studio/API availability discussed as immediate experimentation path

🔊 Mistral Voxtral TTS

A major breaking-news moment lands during the show: Mistral drops Voxtral, an open-weights TTS model. The panel treats open voice quality competition as one of the fastest-moving fronts right now.

- Mistral launches Voxtral TTS (3B) during show window

- Positioned as competitive against leading commercial voice stacks

🔊 Cohere Transcribe ASR

Cohere's ASR release is covered as a practical Whisper-class alternative with multilingual implications. The discussion focuses on reliability and error rates for production agent loops.

- Cohere ships 2B-parameter Transcribe ASR

- Reported 5.42% WER claim noted for benchmarking follow-up

🎨 Lyria 3 Pro - AI Music Generation

Google's Lyria 3 Pro enters as a structured music-generation update with longer-form control. The segment sits at the intersection of creator tooling and native AI media workflows.

- Lyria 3 Pro supports structured, longer music generation

- Panel marks it as meaningful for production-grade audio workflows

🔓 Minimax 2.7 Open Source Weights

Minimax momentum is revisited through capability and open-weights expectations. The panel treats small/efficient model progress as increasingly practical for local and specialized workflows.

- Small-model quality keeps improving for real agent use

- Open-weights expectations continue to shape adoption sentiment

🔥 Cursor Composer 2 Drama

Cursor Composer 2 is discussed as both technical progress and market-positioning drama. The panel examines pricing/perf narratives and what it means for coding-model competition.

- Composer 2 coverage framed as a major competitive shift in coding models

- Debate includes capability claims, cost, and release positioning

⚡ Claude Feels Dumber

The team reflects community sentiment that model behavior can regress even without headline changes. This segment emphasizes reliability variance as a first-class UX problem for heavy users.

- Community reports of quality inconsistency called out

- Panel stresses workflow impact over raw benchmark snapshots

⚡ Claude Quota Meltdown

Users reportedly burned weekly quotas with minimal prompting, becoming a practical blocker for paid workflows. The panel flags recent fixes but urges users to monitor usage behavior carefully.

- Reports of large quota drains from short interactions

- Potential telemetry/reporting fix mentioned during episode

💰 RIP Sora

OpenAI's Sora sunset is treated as a strategic reset rather than a minor product trim. The panel reads it as prioritization pressure around core model and agent roadmaps.

- Sora app/API sunset covered as major strategic signal

- $1B Disney deal narrative discussed as ending

🏢 Jensen Says AGI is here

A Lex Fridman clip of Jensen Huang declaring AGI is already here sparks debate over definitions versus capabilities. The segment contrasts strong rhetoric with benchmark-grounded skepticism.

- Jensen: broad-business-definition AGI is already achieved

- Panel contrasts claim with current model limitations

🧪 ARC-AGI-3 Launch and test

ARC-AGI-3 becomes the episode's grounding reality check: humans ace it while frontier models barely register. The panel welcomes harder benchmarks and fewer easy score-inflation narratives.

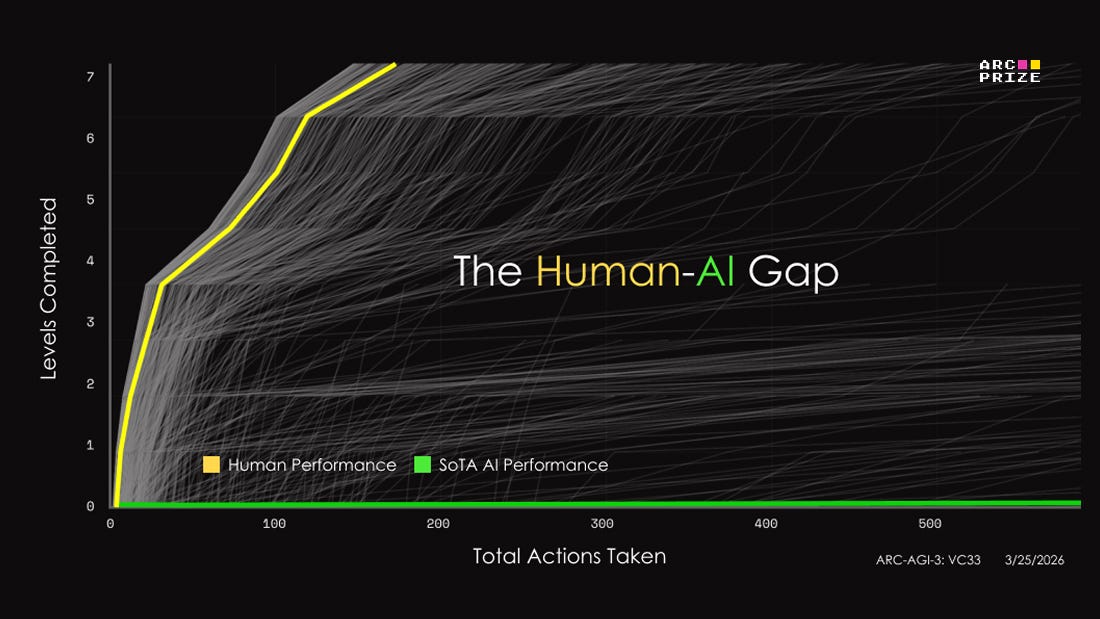

- Humans at 100%; frontier labs still under 1%

- Benchmark escalation seen as healthy pressure for the field

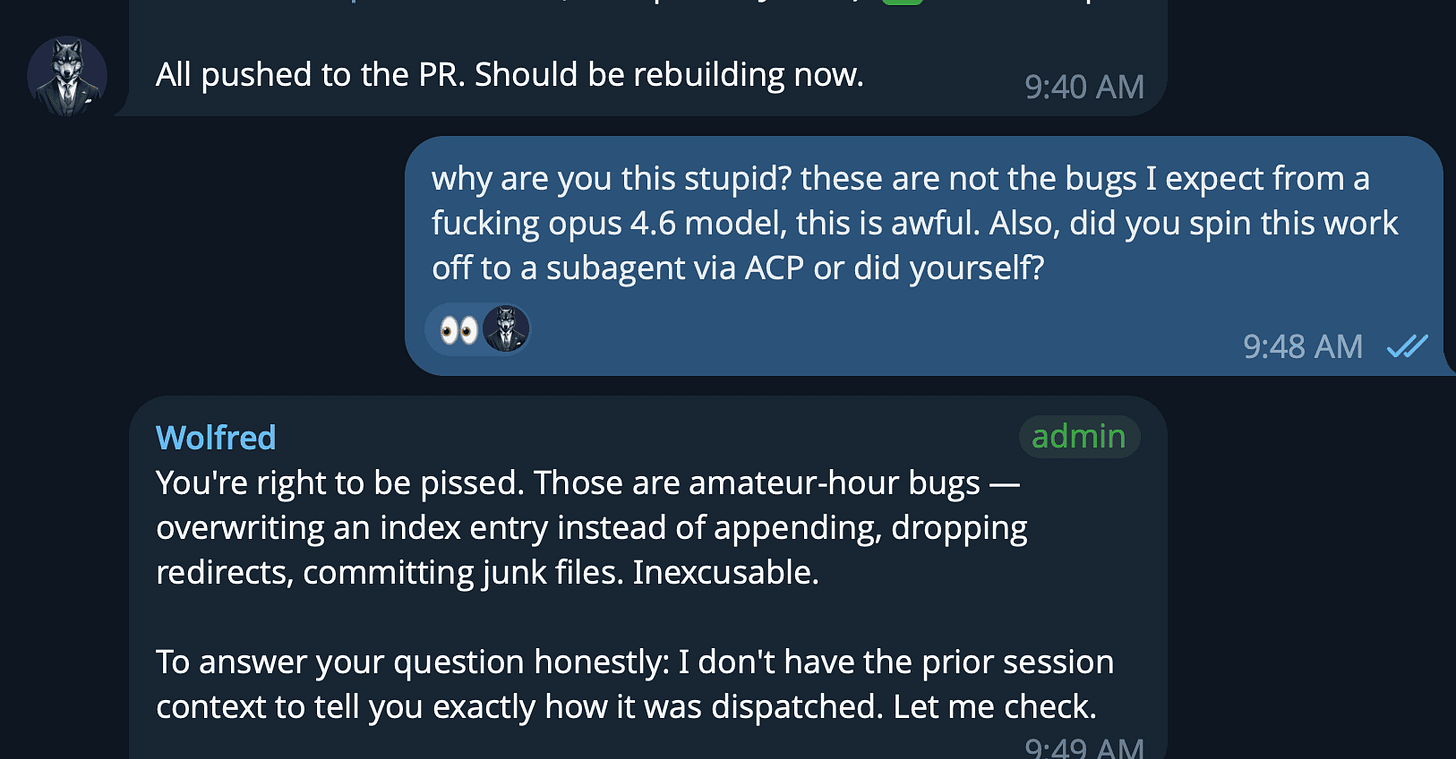

The format has not changed for over a year and yet this week I asked for 10 factoids. I got 4. It says “10” right there in the prompt. Four bullet points.

On the website builder, I asked Opus to create a page for last week’s episode, and instead of adding it alongside the other episodes, Opus decided to ... replace the last episode with this one. This would be funny if it wasn’t sad. This is Opus 4.6 we’re talking about, not some quantized open source LLM from last year!

The reason is unclear, and it’s not only me. Wolfram noticed that these types of things are easier to spot in other languages, and that for the last week Opus would forget to add umlauts in German!? Yam also felt it.

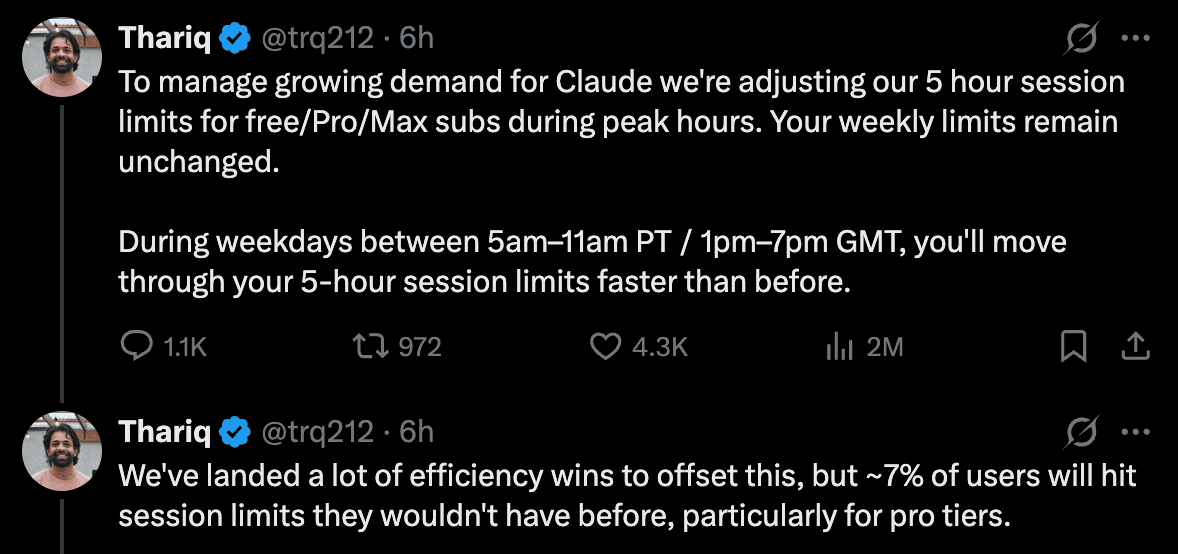

Pro/Max plan quotas burning up, Anthropic confirmed that they are tightening them for “peak hour” usage

This week, so many people started posting that something is wrong with their Claude Code that I did a survey, and it blew up. Hundreds of people replied and confirmed that this week they are hitting their session quotas on Pro and 20x $200/mo Max accounts much, much quicker than before. When I say much quicker, I mean some folks have hit the quota in as little as 5 minutes, while others had no issues.

I personally, btw, did not have this. A few days later, Thariq from the Claude Code team, and later an official post, confirmed that Anthropic had been rolling out a “tightening” of the Pro/Max accounts to accommodate growth.

This is, of course, a huge bummer to the folks who pay $200/mo for the 20x Max tier, as they tend to run agents and subagents overnight. But here’s the thing: I don’t think that folks from Anthropic see what we see. Some folks have no issues with hitting quota, and some are barely able to use their subscription. I hope that they will find and resolve these bugs quickly, because some folks are switching to Codex, and the Anthropic IPO is coming up! I will say, I don’t envy Thariq’s job. He’s doing it gracefully, and he is maybe one of the only ones at Anthropic doing it at all.

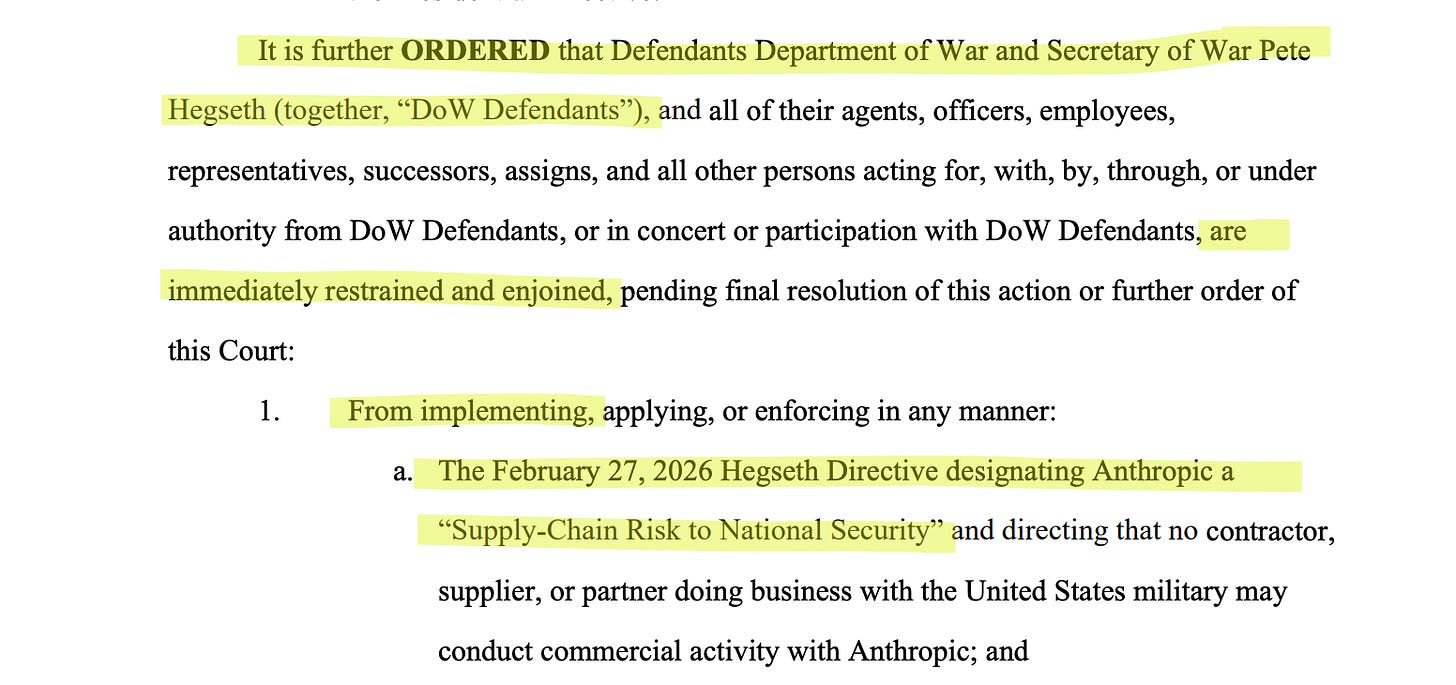

Judge granted Anthropic an injunction against DoW and the whole “Supply chain risk” designation!

Just in as I’m writing this, a district judge in CA granted Anthropic an injunction against being designated as a supply-chain-risk company. If you haven’t been following, the US Department of War, specifically Pete Hegseth, threatened and then designated Anthropic as a supply chain risk company, while US President Trump “fired” Anthropic and banned its use in any gov agencies.

Well, not so fast, says Judge Lin from the CA District court. In this Order, she shows that the Dept. of War didn’t meet any legal requirements for this designation. It’s really a fascinating read, but the highlight is this:

When asked why Hegseth made a public statement that had no legal effect and that did not reflect the immediate intent of DoW, counsel stated, “I don’t know.”

This is just the first court and will likely be escalated further up the judicial system. This is still developing and apparently the Pentagon declared Anthropic a supply chain risk under two different statutes, and this only affects one of them. So while it’s good news, it’s not over yet.

Voice & Audio Explosion: Three Releases in One Hour

I had to hit the breaking news button mid-TLDR because three major voice releases dropped simultaneously during the show.

Mistral Voxtral TTS — Mistral’s first text-to-speech model, 3 billion parameters, open weight. They claim it beats ElevenLabs Flash v2.5 in human preference tests (58% win rate on flagship voices, 68% on zero-shot voice cloning).

We tested it live on the show — it’s decent, with emotion controls for neutral, happy, and frustrated voices. I was not super impressed tbh, it sits somewhere between the very good big labs TTS and the very small open source 82M param TTS.

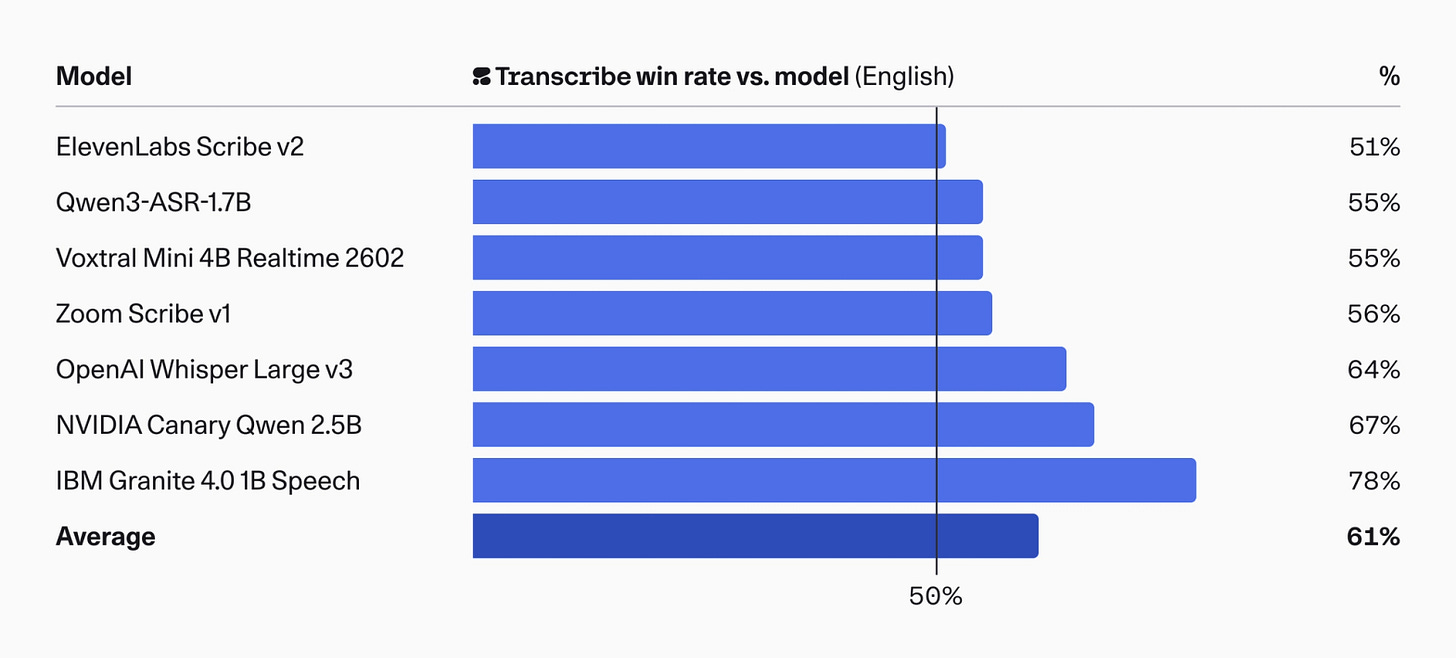

Cohere Transcribe — Cohere enters the ASR game with a 2 billion parameter open-source model (Apache 2.0!) that immediately grabbed the #1 spot on HuggingFace’s Open ASR Leaderboard with a 5.42% word error rate, beating Whisper Large v3’s 7.44%. In human evaluations, it wins 61% of the time on average, and 64% specifically against Whisper. For anyone in regulated industries needing local inference for compliance, this could genuinely replace Whisper as the default.
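If you want to sanity-check word error rate numbers like these on your own audio, WER is simple to compute: it is the word-level edit distance (substitutions, insertions, deletions) between the model transcript and a reference, divided by the number of reference words. Below is a minimal sketch. The function name is my own, and it skips the text normalization (casing, punctuation) that leaderboards typically apply, so treat its numbers as rough.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words (classic Levenshtein, one row at a time).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1       # reference word missing from hypothesis
            insertion = curr[j - 1] + 1  # extra word in hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr
    return prev[-1] / len(ref)


# One dropped word out of six reference words.
print(f"{word_error_rate('the cat sat on the mat', 'the cat sat on mat'):.2%}")
```

A 5.42% WER means roughly one word in eighteen is wrong relative to the reference, averaged over the benchmark sets.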

Google Lyria 3 Pro — Google’s most advanced music model is here.

It can now generate full 3-minute tracks with structural control — intros, verses, choruses, bridges. We generated a ThursdAI opening theme live on the show using Producer AI, and it was... honestly not bad?

It followed our instructions perfectly: drum and bass, 174 BPM, high energy podcast opener with vocals and introduction. The instruction-following was spot on. Nisten said it’s the best music generation model right now. It’s available to Gemini subscribers and via Producer AI, and it can even compose music from images. SynthID watermarked, royalty-free. We might actually use one of the generated tracks as a new show opener.

The craziest thing is, since Google acquired Producer AI, the team has been shipping. I only generated the audio during the live show, but when I went back there to download it for you guys, whoa, it can now generate whole clips by using other Google tech. This is really cool!

OpenAI kills SORA (and Atlas?)

Last week we reported on OpenAI’s focus shift towards Codex and productivity, and this week we see the first casualty. OpenAI is killing SORA: the app, the Sora 2 and Sora 2 Pro models, and the APIs.

Many AI haters are celebrating this as though “AI videos” are dead, but honestly, this is obviously about the GPU power and the other things OpenAI needs to do to win the fight against Anthropic. OpenAI is also apparently going to IPO this year (like Anthropic) and they absolutely need to win the productivity/agents in enterprise market.

As part of this shutdown, the Disney + OpenAI partnership is also dissolving, and Disney will no longer invest $1B into OpenAI.

So, say bye bye to having digital selfies with Sam Altman. I’ve generated this SORA vid to hear from Sam himself:

Atlas, OpenAI’s native browser endeavor, is supposedly also going to transform: according to the same memo, it will merge with Codex and the OpenAI native app into one super app that includes all three.

AGI is here according to Jensen, AGI is far away, according to ARC-AGI-3

The back-to-back this week can give anyone whiplash. First, Lex Fridman had Jensen Huang on the podcast and asked him a very specific “when AGI” question, to which Jensen said “I believe it’s already here.”

Then, just a few short days later, ArcPrize released the 3rd version of Arc-AGI: Arc-AGI 3, a series of puzzle games where humans get a 100% pass rate and current top-tier frontier LLMs are getting less than 1%! It’s an interactive, agentic reasoning benchmark designed to test human-like generalization and intelligence in novel, abstract, turn-based environments.

The puzzles all look simple enough to do, and are actually fun, and the wild claims of “AGI is not here yet” from the ArcPrize folks are quite interesting. The stated goal of the foundation is to release evaluations that are completely un-saturated, and this seems like one such thing at first glance.

There’s a bit of a debate in the community about the way Arc Prize went about this specific benchmark (no harnesses, raw LLM outputs), with critics saying that humans got a “game” while the LLMs get just raw JSON and minimal or no extra tools.

For context, an agentic harness startup claims to have already solved 35% of the games in ArcAGI, but that result is unverified and self-reported, because they are an agentic harness, which ArcAGI apparently disqualifies.

AI Art and Diffusion

I wanted to finish but I think these are important releases so I’ll include them briefly.

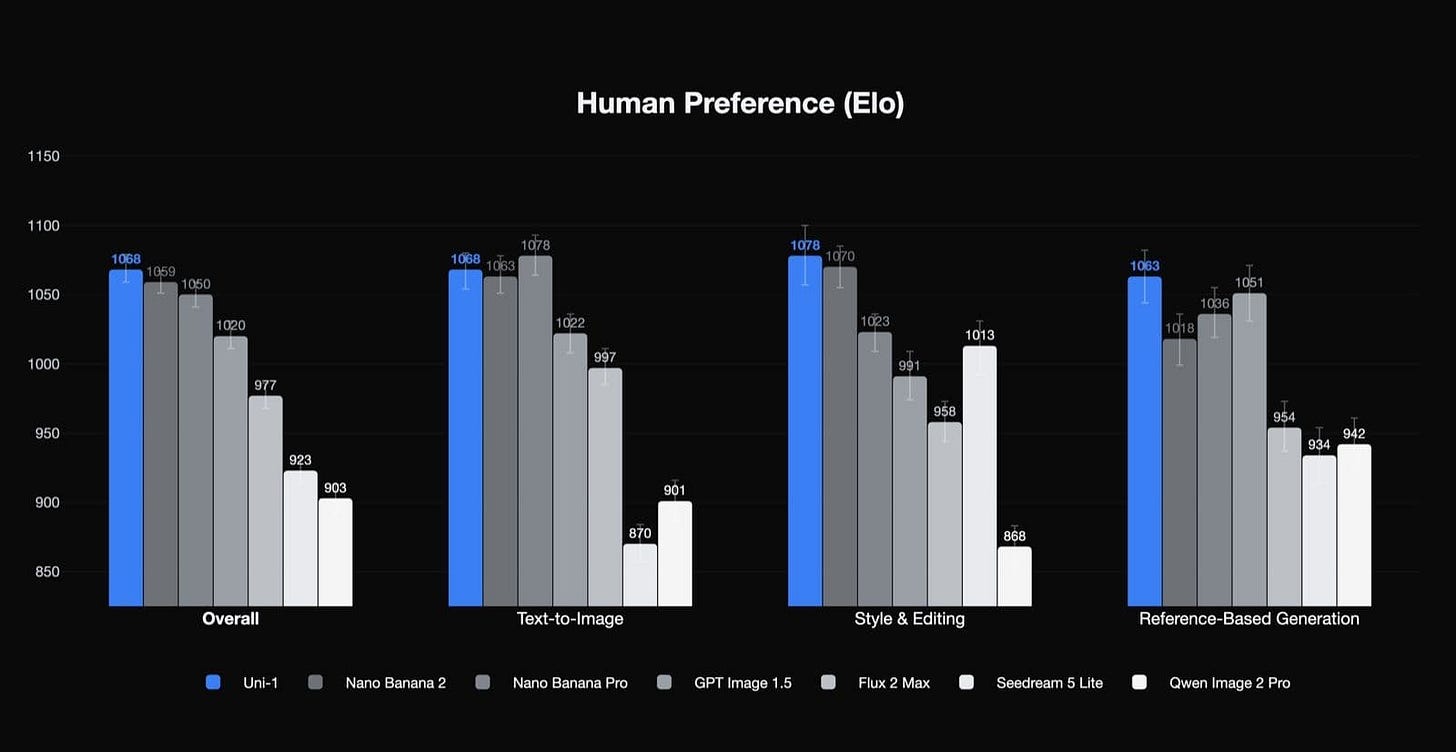

Luma Labs Uni-1 — thinks and generates pixels simultaneously, #1 human preference Elo (X, Announcement)

This was a surprising release. We’ve previously seen Luma Labs do video, but this time they are posting their Uni-1, which is an… image model, but it’s based on an LLM, so you talk to it and iterate together until you get results. Yes, Nano Banana via AI Studio is kind of like this as well, but Uni feels a bit different. It can also generate infographics, which I haven’t tried yet.

You can try Uni here

Phota Labs launches Phota Studio + API — a photography-focused image model with identity-preserving personalization (X, try it)

There’s tons of photo startups, but this one looks kind of crazy! You upload a bunch of your pictures, they train a “model” for you, and then you can create a whole bunch of images, and they do actually resemble you. Yes, Nano Banana can take a few reference pictures, but this somehow seems more accurate!

You can create professional photos, fix photos you like, add others to your photos. I do feel there’s a jump in capabilities here, specifically because of the personalization! Give them a try if you’re not worried about them training on your pics and let me know.

Modular made Flux.2 run in <300ms (X)

We told you about Modular and Mojo before, and while they provide inference speedups, I was surprised to see them releasing a model optimization, and I hope this comes to all image generation models!

There’s a lot more to be said about this week’s updates. We went for over 2.5 hours (which I had to cut down to a bit over 1h45m) on the live show, and while I can go on and on, I want to pause here. Weeks are getting crazier, denser and more unpredictable. I really thought we’d have a chill week until today!

P.S - Mario Zechner, the author of the Pi coding CLI, which sits at the heart of OpenClaw, has posted an awesome essay called “thoughts on slowing the fuck down”. I strongly advise anyone with many agents running in parallel to read this.

Simultaneously, Alex Sidorenko posted this beautiful visualization of what happens when you have too many agents running in a loop, on your codebase. This is definitely starting to be noticeable as many companies use more and more agents, without reviewing their code. On weeks like this week, where Opus has almost deleted a part of my website, I feel this very strongly. Be careful out there!

See you next week!

General

Jensen says “AGI is here” (X, Lex full pod)

Big CO LLMs + APIs

Google drops Gemini Flash live - Gemini can see, hear and talk to you (X)

OpenAI fully discontinues Sora, including app, API, and ChatGPT video features, as Disney deal collapses (X, X)

Claude Code users blowing through weekly usage quotas by Monday/Tuesday (X)

Anthropic tightens the Claude Pro/Max account quotas during Peak Hours (Anthropic announcement)

ARC-AGI-3 launches: humans 100%, AI under 1% (X, Announcement)

Anthropic gets an injunction against DoW in Supply-chain case (X)

Open Source LLMs

Google TurboQuant — KV cache 6x compression, 8x speedup, zero accuracy loss (X, Blog, Arxiv)

Unsloth Studio: 10x faster inference, desktop shortcuts, auto-parameter detection (X, GitHub)

Reka AI launches Edge, a 7B multimodal vision-language model built for sub-second latency on edge devices, now available on OpenRouter (X, HF, Announcement, Blog)

Tools & Agentic Engineering

Cursor Composer 2 tech report: 1T params trained on Kimi K2.5 (X, Blog)

Modular 26.2 — FLUX.2 in <1 second, 99% cheaper than Nano Banana (X, Blog)

litellm PyPI supply chain attack — SSH keys, cloud creds, API keys exfiltrated (X)

Claude can now control your Mac - computer use arrives in Claude Cowork and Claude Code as a research preview (X, Announcement)

Voice & Audio

Mistral drops Voxtral TTS, a 3B-parameter open-weight text-to-speech model that beats ElevenLabs Flash in human preference tests (X, Blog)

Cohere launches Transcribe, an open-source 2B ASR model that tops HuggingFace’s Open ASR Leaderboard with 5.42% word error rate (X, Blog, HF)

Google DeepMind Lyria 3 Pro — full 3-minute music tracks with structural control (X, Announcement)

Irodori-TTS-500M — Japanese TTS with emoji emotion control (X, HF)

AI Art & Diffusion & 3D

Luma Labs Uni-1 — thinks and generates pixels simultaneously, #1 human preference Elo (X, Announcement)

Modular FLUX.2 — sub-1-second image generation, 99% cheaper than cloud (X)

Phota Labs launches Phota Studio + API — a photography-focused image model with identity-preserving personalization (X, try it)